A magazine where the digital world meets the real world.

Password Strength

by Paul Curzon, Queen Mary University of London

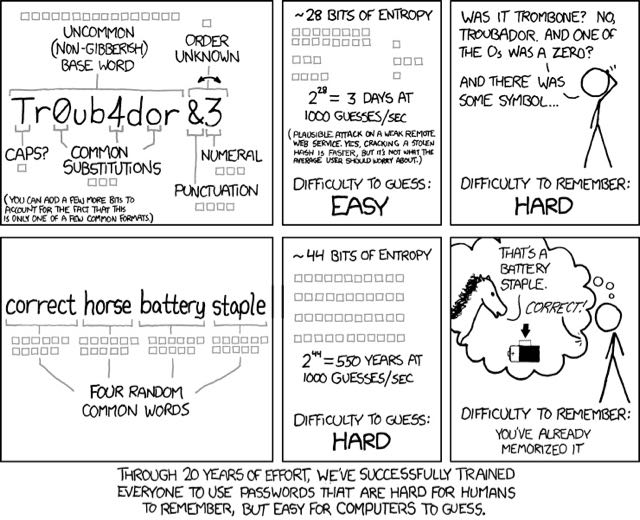

How do you decide whether a password is strong? Computer scientists have a mathematical way to do it. It is based on an idea called information entropy, part of "Information Theory", invented by electrical engineer Claude Shannon back in 1948. This XKCD cartoon for computer scientists uses this idea to compare two different passwords. Unless you understand information theory, though, working out what is going on in the detail is a bit mind-blowing... so let's explain the computer science!

Entropy is based on the number of guesses someone would need to make, trying all the possibilities for a password one at a time: doing what computer scientists call a brute force attack. Think about a PIN on a mobile phone or cash point. Suppose it was a single digit: there would be 10 possibilities to try. If 2-digit PINs were required, there would be 100 different possibilities. With the normal 4 digits you need 10,000 (10x10x10x10) guesses to be sure of getting it. Different symbol sets lead to more possibilities. If you had to use lower-case letters instead of digits, there are 26 possibilities for length 1, so over 450,000 (26x26x26x26) guesses are needed for a 4-letter password. If upper-case letters are also allowed, that goes up to more than 7 million (52x52x52x52) guesses. If you know they used a word, though, you don't have to try all the possibilities, just the words. There are only about 5000 four-letter words, so far fewer guesses are needed. So password strength depends on the number of symbols that could be used, but also on whether the PIN or password was chosen randomly (words aren't a random sequence of letters!)
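The counting above is just "number of symbols, multiplied by itself once per position". Here is a minimal Python sketch of that calculation; the function name `brute_force_guesses` is our own, chosen for illustration.

```python
def brute_force_guesses(symbol_count: int, length: int) -> int:
    """Guesses needed to try every password of this length from this symbol set."""
    return symbol_count ** length

for label, symbols, length in [
    ("1-digit PIN", 10, 1),
    ("2-digit PIN", 10, 2),
    ("4-digit PIN", 10, 4),
    ("4 lower-case letters", 26, 4),
    ("4 mixed-case letters", 52, 4),
]:
    print(f"{label}: {brute_force_guesses(symbols, length):,} guesses")

# Prints 10; 100; 10,000; 456,976; 7,311,616 guesses respectively.
```

This only counts truly random choices; as the paragraph says, a password that is really a dictionary word needs far fewer guesses than this formula suggests.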

To make everything standard, Shannon did entropy calculations in binary, using a symbol set of only 0 and 1, and measured entropy in the number of 'bits' needed to count all the guesses. Any other set of symbols is converted to binary first. If a cashpoint only had buttons A, B, C and D for PINs, then to do the calculation you count those 4 options in binary: 00, 01, 10, 11, and see that you need 2 bits to do it. Real cashpoints have 10 digits and need just over 3 bits to represent all of a 1-digit PIN, so its entropy is just over 3. To count the possibilities for a 4-digit PIN you need just over 13 bits, so that is its entropy. The lower-case alphabet needs just under 5 bits to count its 26 possibilities, so its entropy is about 4.7, and so on.
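"How many bits does it take to count the possibilities?" is the base-2 logarithm of the number of possibilities. A small sketch, assuming Python's standard `math.log2`; the helper name `entropy_bits` is hypothetical. Note the answer can be fractional ("just over 3"), which is why entropy is not always a whole number of bits.

```python
import math

def entropy_bits(possibilities: int) -> float:
    """Entropy in bits: log base 2 of the number of equally likely possibilities."""
    return math.log2(possibilities)

print(entropy_bits(4))                 # 2.0   buttons A, B, C, D
print(round(entropy_bits(10), 2))      # 3.32  one digit
print(round(entropy_bits(10_000), 2))  # 13.29 a 4-digit PIN
print(round(entropy_bits(26), 2))      # 4.7   one lower-case letter

# Each extra random digit adds the same number of bits, so 4 digits = 4 x one digit.
print(math.isclose(entropy_bits(10_000), 4 * entropy_bits(10)))  # True
```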

So entropy is not measured directly in terms of guesses (which would be very big numbers) but indirectly in terms of bits. If you determine the entropy to be 28 bits (as in the cartoon), that means the number of different possibilities to guess would fit in 28 bits if each guess was given its own unique binary code. 28 bits of binary can represent over 268 million different things (2^28), so that is the number of guesses needed to be sure of cracking it. It is a lot of guesses, but not so many that a computer couldn't try them all fairly quickly, as the cartoon points out!
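Going the other way, from bits back to guesses, means raising 2 to the power of the entropy. This sketch also uses Python's `int.bit_length()` to check the "each guess gets its own binary code" idea: if guesses are numbered from 0, the last one still fits in 28 bits, but one more would need a 29th bit.

```python
bits = 28
guesses = 2 ** bits
print(f"{guesses:,}")               # 268,435,456

# Number the guesses 0, 1, 2, ... guesses - 1: the largest code needs exactly 28 bits.
print((guesses - 1).bit_length())   # 28
print(format(guesses - 1, "b"))     # twenty-eight 1s

# One more possibility would no longer fit in 28 bits.
print(guesses.bit_length())         # 29
```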

Where do those 28 bits come from? They assume the hacker is trying to crack passwords that follow a common pattern people use (so are not that random). The hacker assumes the person did the following: take a word; maybe make the first letter a capital; swap digits in for some similar-looking letters; and finally add a symbol and a digit at the end. It follows the general advice for passwords, and looks random... but is it?
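To see how mechanical that pattern is, here is a hypothetical Python sketch of it. The function `apply_pattern`, its parameters and the base word "troubador" are our own illustration; only the look-alike swaps ('o' for 0, 'a' for 4) come from the article. Every argument is one small choice a hacker can simply loop over.

```python
# Look-alike swaps mentioned in the article: 'o' -> '0', 'a' -> '4'.
LOOKALIKES = {"o": "0", "a": "4"}

def apply_pattern(word: str, capitalise: bool, swap_at: list[int],
                  digit: str, symbol: str, digit_first: bool) -> str:
    """Hypothetical helper: build a password from a base word using the common pattern."""
    letters = list(word)
    for i in swap_at:
        # Only letters that have a look-alike can be swapped; others raise KeyError.
        letters[i] = LOOKALIKES[letters[i]]
    if capitalise:
        letters[0] = letters[0].upper()
    # The digit and symbol go on the end, in one of two orders.
    ending = digit + symbol if digit_first else symbol + digit
    return "".join(letters) + ending

print(apply_pattern("troubador", True, [2, 5], "3", "&", False))  # Tr0ub4dor&3
```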

How do we work out the entropy of a password invented that way? First, think about the base word the password is built around. The cartoon estimates it as 16 bits for a word of up to 9 lower-case letters, so it assumes there are 2^16 (65,536) possible base words. There are about 40,000 nine-letter words in English, so that's an overestimate if you assume you know the length. Perhaps not, though, if you allow for things like fictional names and shorter words.
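A quick check of those two numbers, as a sketch using Python's `math.log2`: 16 bits covers 65,536 base words, while about 40,000 words would only need a little over 15 bits.

```python
import math

base_word_bits = 16
candidate_words = 2 ** base_word_bits
print(candidate_words)                  # 65536 possible base words

# If the hacker knew it was one of ~40,000 nine-letter words (figure from the article):
nine_letter_bits = math.log2(40_000)
print(round(nine_letter_bits, 1))       # 15.3 bits, a bit less than the 16 assumed
```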

Because the pattern allows the first letter to be upper-case, that adds 1 more bit for the two guesses now needed for each word tried: check the upper-case version, then the lower-case one. Similarly, as any letter 'o' might have been swapped for 0, and any 'a' for 4 (as people commonly do), this adds 3 more bits (assuming there are at most 3 such opportunities per word). Finally, we need 3 bits for the 9 possible digits and another 4 bits for the common punctuation characters added on the end. Another bit is added for the two possible orders of punctuation and digit. Add up all those bits and we get the 28 bits suggested in the cartoon (where bits are represented by little squares).
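The bit budget above can be tallied in a few lines of Python. The labels are our own wording; the numbers are the ones from the paragraph. Bits add because the numbers of choices multiply: 2^16 x 2 x 2^3 x 2^3 x 2^4 x 2 is the same as 2^28.

```python
# Bits for each choice in the pattern, using the article's estimates.
pattern_bits = {
    "base word": 16,
    "capitalise first letter or not": 1,
    "look-alike swaps (up to 3, each on or off)": 3,
    "digit on the end": 3,
    "punctuation on the end": 4,
    "order of digit and punctuation": 1,
}

total = sum(pattern_bits.values())
print(total)                 # 28
print(f"{2 ** total:,}")     # 268,435,456 guesses
```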

Now do a similar calculation for the other way of creating passwords suggested in the cartoon. If there are only about 2000 really common words a person might choose from, we need 11 bits per word. If we string 4 such words together completely at random (not following a recognisable phrase), we get a much larger entropy of 44 bits (4 x 11) overall. More bits means harder to crack, so this password will be much, much harder to crack than the first. It takes over 17 trillion guesses rather than 268 million.
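The same arithmetic for the four-word password, as a Python sketch. Here log2(2000) comes out just under 11, which we round to 11 bits per word as the article does. The last line shows how many times more guesses the 44-bit password needs than the 28-bit one: 2^16.

```python
import math

bits_per_word = math.log2(2000)              # about 10.97, rounded to 11 below
passphrase_bits = 4 * round(bits_per_word)

print(round(bits_per_word, 2))               # 10.97
print(passphrase_bits)                       # 44
print(f"{2 ** passphrase_bits:,}")           # 17,592,186,044,416 guesses
print(2 ** passphrase_bits // 2 ** 28)       # 65536 times the 28-bit password
```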

The serious joke of the cartoon is that the rules we are told to follow lead people to create passwords that are not very random at all, precisely because they were created by following rules. That means they are easy to crack (but still hard to remember). If instead you use the method of 4 longish, unconnected words, which doesn't obey any of the rules we are told to follow, you actually get a password that is much easier to remember (if you turn it into a surreal picture), but harder to crack because it has more randomness in it! That is the real lesson. However you create a password, it needs lots of randomness to be strong. Entropy gives you a way to check how well you have done.
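One way to put the lesson into practice is sketched below in Python, assuming the standard `secrets` module (designed for security-sensitive random choices) and a word list of your own. The function `make_passphrase` and the tiny `demo_words` list are hypothetical illustrations. Notice that the entropy is worked out from how the password was chosen (list size and number of words), not from the letters that happen to come out.

```python
import math
import secrets

def make_passphrase(word_list: list[str], n_words: int = 4) -> tuple[str, float]:
    """Pick n_words uniformly at random; return the passphrase and its entropy in bits.

    Assumes word_list has no duplicate words, otherwise the entropy is overstated.
    """
    words = [secrets.choice(word_list) for _ in range(n_words)]
    entropy = n_words * math.log2(len(word_list))
    return " ".join(words), entropy

# Tiny illustrative list: a real one needs thousands of words (about 2000 for 44 bits).
demo_words = ["correct", "horse", "battery", "staple", "orange", "kettle", "planet", "violin"]
phrase, bits = make_passphrase(demo_words)
print(phrase)  # different every run
print(bits)    # 12.0: only 8 words to choose from, so far too weak
```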